Government Survey & Engagement Software for Inclusive Public Input

At the end of every public comment period, there’s a question agencies should be able to answer: did we actually hear from the people this decision affects?

For many agencies, that question is harder to answer than it should be. Not because the effort wasn’t there, but because the tools used to collect input weren’t built to reach a full community. Online-only surveys miss residents without reliable internet. English-only forms miss non-English speakers. The result is a participation record that reflects who could easily respond, not who had the most at stake.

Government survey and engagement software built for multi-channel input is designed to close that gap, so the answer to that question can be yes.

What Is Government Survey & Engagement Software?

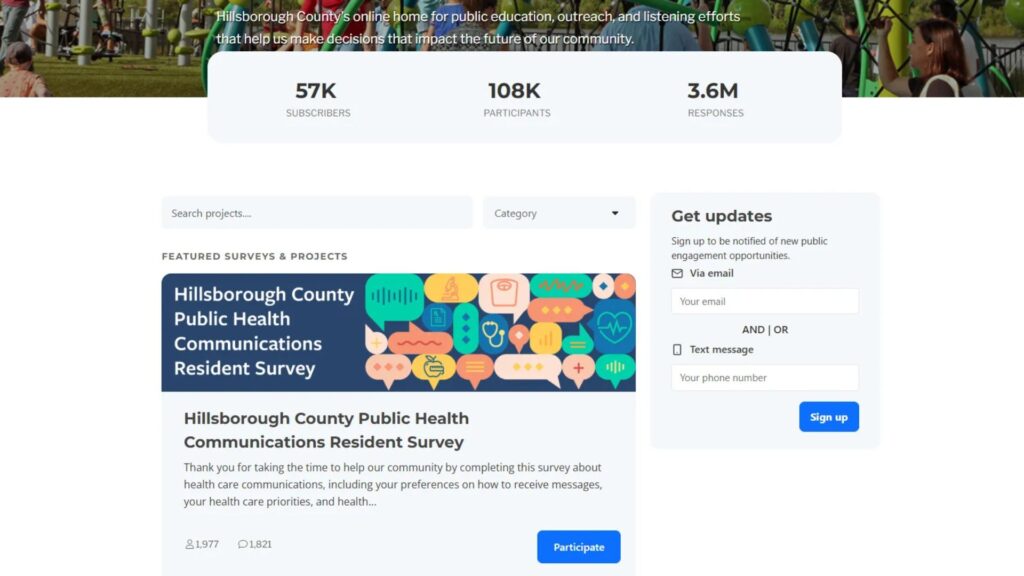

Government survey and engagement software is a multi-channel platform built to collect structured and open-ended public input across the full lifecycle of a government project or initiative. It goes well beyond an online form. It includes engagement portals, project pages, interactive maps, subscription forms, SMS surveys, voicemail input, in-person tools, and the structured record-keeping that connects all of it.

The defining difference from consumer tools is design intent. Government engagement software is built around compliance, socioeconomic representation, and record integrity, not response volume or ease of setup. It assumes that an agency needs to know not just what people said, but who said it, through which channel, in relation to which project, and whether the full range of affected residents had a realistic opportunity to participate.

That distinction shapes everything from how input is collected to how it can be reported and defended later.

Where Consumer Survey Tools Fall Short

Consumer survey tools were designed for internal team feedback, event registrations, and informal polling. They do that well. However, government engagement processes have different requirements, and the gaps are structural.

- Access Gaps: Online-only formats exclude residents without reliable internet access or devices. This isn’t a fringe issue. It disproportionately affects the same communities most affected by infrastructure, housing, and transportation decisions.

- Multilingual Limitations: There’s no native multilingual support. Agencies must manage translation manually, introducing inconsistency and creating gaps in the official record.

- Lack of Project Context: Surveys exist as standalone events. Responses aren’t tied to a project record, timeline, or formal comment period, making documentation and defensibility harder later.

- No Representation Insight: Response counts show volume, not representation. There’s no built-in way to understand who participated, where gaps exist, or how input varies across demographics or geography.

The question isn’t whether you got responses. It’s whether the responses represent the people affected by your decision.

The Channels Resident Engagement Actually Requires

Different residents participate through different channels. That isn’t a preference. For many people, it’s a constraint. A feedback strategy that doesn’t account for that will systematically underrepresent the populations it most needs to hear from.

Government survey and project tools form the core: structured project pages and surveys that tie every response to a specific project, channel, and timestamp. Input is captured in context from the start, not reconstructed later.

- Interactive maps: Add a spatial dimension that matters for transportation, land use, and infrastructure projects. Residents can indicate specific locations, corridors, or areas of concern in ways that a standard survey question can’t capture.

- SMS and text surveys: Reach residents who don’t have smartphones or reliable broadband, but do have a basic mobile phone. For many lower-income and rural communities, this isn’t an alternative channel. It’s the primary one.

- Voicemail and phone input: Serves populations who prefer or rely on phone communication, including seniors, residents with lower digital literacy, and those for whom written input in a second language presents a barrier.

- In-person and kiosk tools: Extend reach to community events, libraries, transit stations, and neighborhood centers, bringing the feedback process to where people already are rather than requiring them to seek it out.

- Subscription forms: Capture open-ended input in a format that can be reviewed, organized, and included in a formal record.

Multi-channel isn’t a feature upgrade. It’s the difference between hearing from your whole community and hearing from the easiest part of it.

PublicInput brings every feedback channel, including surveys, maps, SMS, voicemail, and in-person tools, into a single project record.

Online and offline resident outreach ties all of these channels together into a coordinated outreach strategy. Agencies aren’t just opening channels; they’re actively reaching the residents who are hardest to engage through digital-only approaches.

What Makes Feedback Data Defensible

Collecting input is half the job. The other half is being able to stand behind it when the process is questioned.

Defensible public feedback has input tied to a named project with timestamps and source channel recorded for every response. It has demographic and geographic cross-tab reporting that shows who was reached and, just as importantly, where participation gaps exist. It has open-ended comments structured and categorized, not sitting in a raw text export that no one can practically review. And it has a complete record exportable in formats suitable for legal, regulatory, or open records review.

This matters when a project decision gets challenged. It also matters for the quieter but equally frequent question that comes from leadership, elected officials, or community advocates: did you actually hear from the people this affects?

Public engagement analytics and reporting turns that raw input into structured, reportable insight, with demographic breakdowns, geographic analysis, theme clustering, and documentation that decision-makers can use and auditors can review.
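To make the idea of a participation gap concrete, here is a minimal sketch in Python. It assumes each response is stored with its project, channel, timestamp, and a self-reported area, and it compares each area's share of responses with that area's share of the affected population. The field names, the `participation_gaps` function, the 5-point tolerance, and the sample data are all hypothetical illustrations, not PublicInput's data model or API.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Response:
    """One piece of public input, recorded in context (field names are illustrative)."""
    project_id: str
    channel: str           # e.g. "web", "sms", "voicemail", "in_person"
    received_at: datetime
    area: str              # geography the respondent reported, e.g. a district
    comment: str


def participation_gaps(responses, population_share, tolerance=0.05):
    """Flag areas whose share of responses trails their share of the population.

    Returns {area: (response_share, population_share)} for every area where
    the shortfall is larger than `tolerance` (an assumed threshold).
    """
    counts = Counter(r.area for r in responses)
    total = sum(counts.values())
    gaps = {}
    for area, pop_share in population_share.items():
        resp_share = counts.get(area, 0) / total if total else 0.0
        if pop_share - resp_share > tolerance:
            gaps[area] = (round(resp_share, 2), pop_share)
    return gaps


now = datetime.now(timezone.utc)
responses = [
    Response("corridor-study", "web", now, "North", "Add a crosswalk."),
    Response("corridor-study", "web", now, "North", "Slow the traffic."),
    Response("corridor-study", "sms", now, "North", "More bus stops."),
    Response("corridor-study", "voicemail", now, "South", "Fix the sidewalks."),
]
# Hypothetical population shares for the affected area.
population_share = {"North": 0.5, "South": 0.5}

print(participation_gaps(responses, population_share))
# prints {'South': (0.25, 0.5)}
```

In this made-up example, four responses look like healthy volume, but the South area supplies only a quarter of them while holding half the population. That is the kind of gap a response count alone would hide.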

Equity and Legal Obligations

For many agencies, multi-channel feedback isn’t just a best practice. It’s a legal requirement.

Title VI of the Civil Rights Act of 1964 and the federal Limited English Proficiency guidelines require meaningful access for communities where a significant portion of residents aren’t primarily English-speaking. ADA obligations extend to digital input tools. Broadband gaps are well documented across demographic and geographic lines.

Local governments, transit agencies, DOTs, and MPO engagement teams are all operating under frameworks that make inclusive participation a compliance matter, not just an aspiration. The channel strategy isn’t separable from the compliance strategy.

A Higher Standard for Public Input

Agencies doing this well aren’t necessarily the ones with the largest budgets. They’re the ones with a process that makes it possible to answer, with confidence, whether the community was genuinely heard.

The right software doesn’t make feedback easy. Reaching a full and representative community is genuinely complex, and no platform changes that. What it does is make the complexity manageable, the record defensible, and the outcomes more equitable.

The goal isn’t more responses. It’s the right responses, from the right people, through the channels that actually reach them.

If your agency is managing public feedback across disconnected tools, there’s a better way to work. See how government survey and engagement software built for multi-channel input makes inclusive participation achievable without tripling staff workload.