Better together: IAP2 Best Practices and Public Participation Software

The IAP2 Spectrum of Public Participation

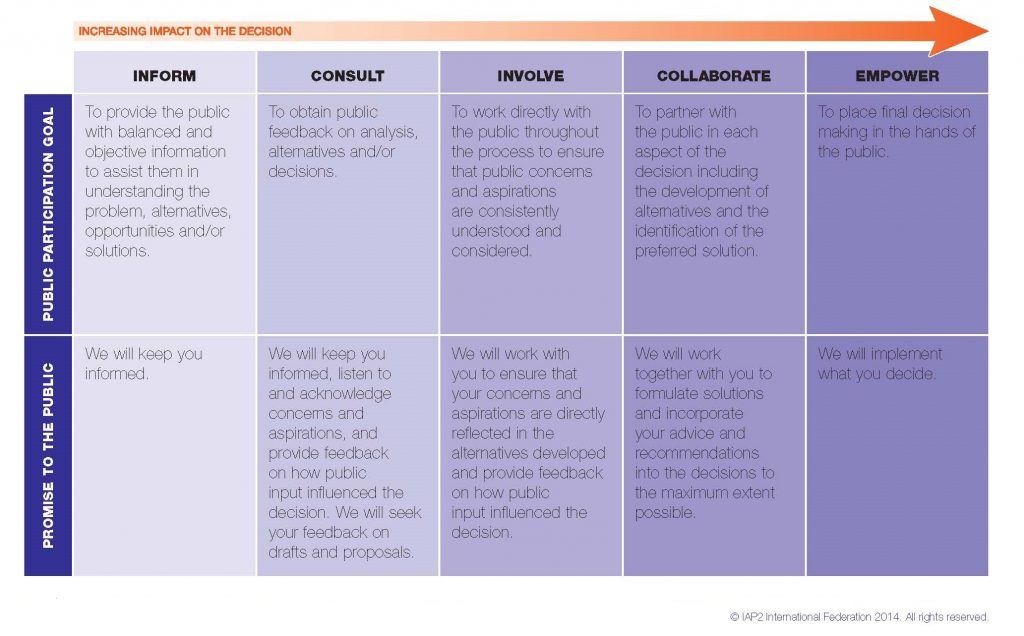

When public involvement practitioners are looking for best practices, they often turn to IAP2, the International Association for Public Participation. The IAP2 Spectrum of Public Participation lays out a trusted framework for defining the public’s role in the public participation process. With integrated public engagement software, it is possible to execute and, most importantly, build upon each step along the way.

The IAP2 Spectrum divides public participation into five distinct categories:

- Inform

- Consult

- Involve

- Collaborate

- Empower

Inform

Promise to the community: We will keep you informed.

To keep the public informed, describe central issues in plain terms. Visuals that illustrate alternatives, along with PDF documents or a short video that briefly describes the issues at hand, help ensure that the public is educated on the project.

Myth: We shouldn’t ask questions if our intent is to inform.

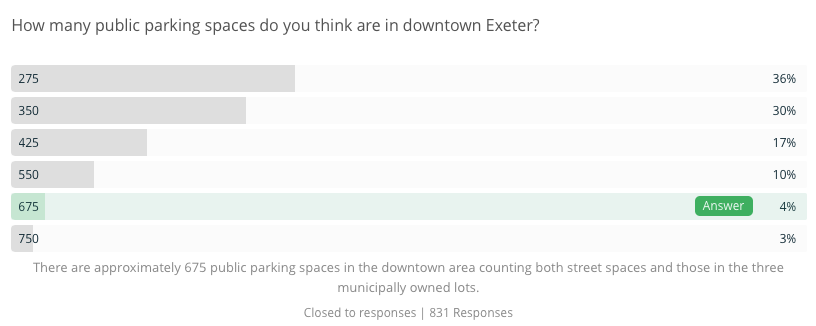

The truth is, a well-designed question can be the ultimate tool for raising awareness. One example we love was the Rockingham Planning Commission’s usage of a question about parking spaces to inform the public and address misconceptions on an issue:

In Durham, NC, planners conducted an affordable housing survey with questions designed to address misconceptions about duplexes and triplexes. Only 18% of respondents were initially able to identify a duplex in a lineup:

Another opportunity ‘Inform’ processes offer is a chance to collect contact information every time a resident interacts with us. A simple best practice is including an embedded signup form (or in-meeting registration) where residents can provide their preferred means of communication. With each touchpoint you’re building on your public participation database, even if you’re not actively seeking input.

Ask:

- Does my toolkit support question formats with correct answer feedback?

- Can my toolkit leverage highly-visual questions to address common misconceptions?

- Can my participation software collect multiple contact formats (email, phone, SMS, address, social) and follow up with residents throughout the process?
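
To make "question formats with correct answer feedback" concrete, here is a minimal Python sketch of a quiz-style question that responds to each answer and reports the share of correct responses. The `QuizQuestion` class, the photo labels, and the sample answers are all hypothetical illustrations. They are not the Durham survey data, nor any particular product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizQuestion:
    """Hypothetical question type that knows its correct answer."""
    prompt: str
    options: tuple
    correct: str
    explanation: str

    def feedback(self, answer):
        """Return the message a resident would see after answering."""
        if answer == self.correct:
            prefix = "Correct."
        else:
            prefix = f"Not quite. The answer is {self.correct}."
        return f"{prefix} {self.explanation}"


def percent_correct(question, answers):
    """Share of answers matching the correct option, as a percentage."""
    if not answers:
        return 0.0
    right = sum(1 for answer in answers if answer == question.correct)
    return round(100 * right / len(answers), 1)


# Illustrative data only (assumed for this sketch).
question = QuizQuestion(
    prompt="Which of these homes is a duplex?",
    options=("Photo A", "Photo B", "Photo C"),
    correct="Photo B",
    explanation="A duplex is a single building with two separate homes.",
)
sample_answers = ["Photo A", "Photo B", "Photo C", "Photo A", "Photo C"]

share = percent_correct(question, sample_answers)
message = question.feedback("Photo A")
```

Tracking the share of correct answers before and after the feedback is shown gives a rough measure of whether the question actually raised awareness.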

Consult

Promise to the community: We will keep you informed, listen to and acknowledge concerns and aspirations, and provide feedback on how public input influenced the decision. We will seek your feedback on drafts and proposals.

After overcoming common public involvement hurdles like getting the word out and increasing equity, a new challenge often appears:

Myth: We don’t have the time or staff to accept more public comments than we already get.

The right public involvement software should reduce the effort required to collect input and follow up with people. The key is shifting from siloed tools and processes to public participation software designed to analyze all public comments, however they are collected, and help you batch your communications by common theme or group.

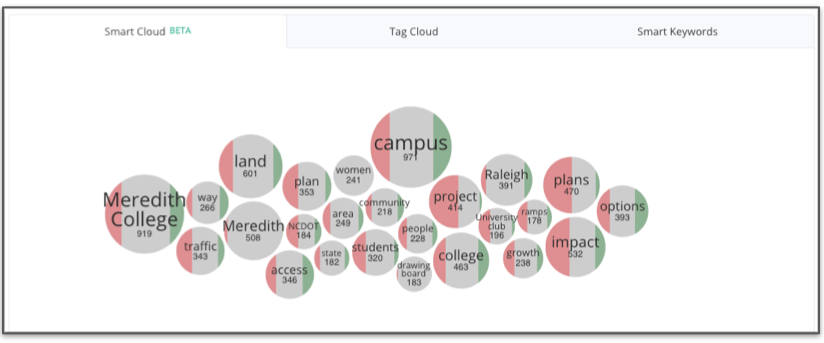

One tool that’s changing the game is machine-first qualitative analysis. Teams using PublicInput’s integrated machine learning analysis for comments receive visual groupings of major comment themes, which can then be explored and refined by staff:

From here, comment tagging rules can be set up to assist with the previously mundane task of hand-coding comments one at a time.
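
As a rough illustration of what a reusable tagging rule might look like, the Python sketch below applies keyword-based rules to a batch of comments. It is a simplified stand-in and not a description of how PublicInput's analysis works. The `TagRule` class, the keywords, and the sample comments are assumptions made up for this example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class TagRule:
    """Hypothetical reusable rule: apply `tag` when any keyword appears."""
    tag: str
    keywords: tuple


def apply_rules(comment, rules):
    """Return the sorted tags whose keywords appear as whole words."""
    words = set(re.findall(r"[a-z']+", comment.lower()))
    return sorted(rule.tag for rule in rules if words & set(rule.keywords))


# Illustrative rules and comments (assumed for this sketch).
RULES = [
    TagRule("parking", ("parking", "garage")),
    TagRule("traffic", ("traffic", "congestion")),
    TagRule("housing", ("duplex", "triplex", "rent")),
]

comments = [
    "Traffic near the stadium is already bad, and parking is worse.",
    "I would support a duplex on my street.",
    "Please add more street trees.",
]

tagged = {comment: apply_rules(comment, RULES) for comment in comments}
```

Comments that match no rule, like the third one here, come back with an empty tag list, which makes them easy to route to staff for manual review.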

Ultimately, saving time on analysis is a key requirement when choosing engagement software.

Ask:

- Does my participation software automatically group similar comments for faster analysis?

- Do I have integrated email and text messaging tools to streamline my replies to participants?

- Can I set up reusable comment coding/tagging rules, or do I have to manually tag each comment?

Involve

Promise to the community: We will work with you to ensure that your concerns and aspirations are directly reflected in the alternatives developed and provide feedback on how public input influenced the decision.

Myth: The only difference between ‘Consult’ and ‘Involve’ is the extra follow-up step.

Closing the feedback loop is a crucial predictor of public trust, and with the ‘Involve’ format it’s even more important to reiterate what we’ve heard and how it informs outcomes – often iteratively.

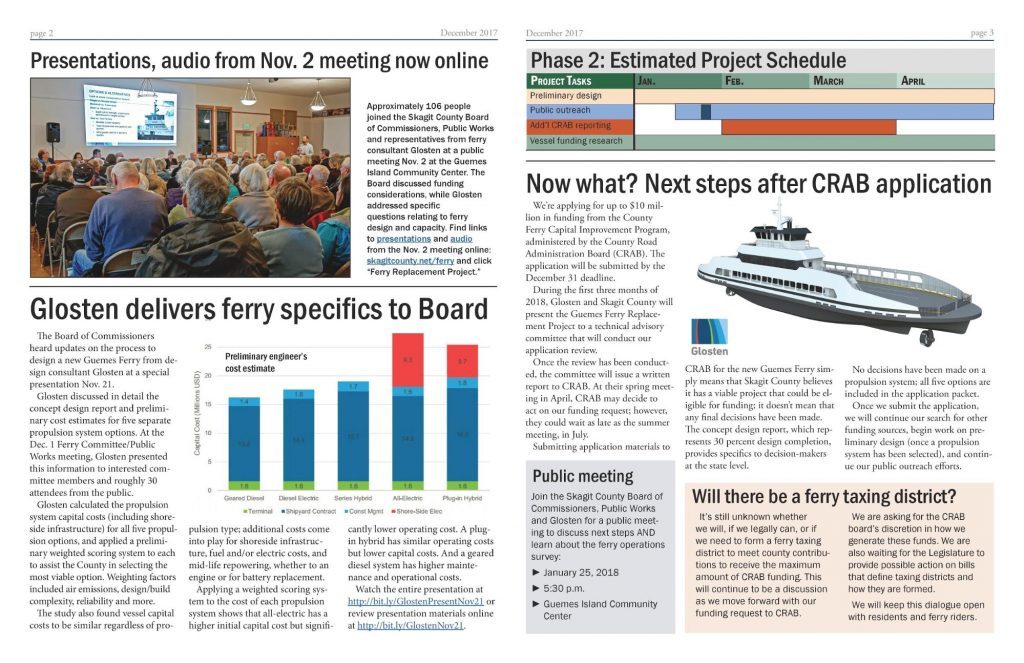

A great example is Skagit County, Washington’s effort to design a new ferry to Guemes Island. After engaging residents with multiple methods, their team followed up with specifics about the feedback they received and what it meant for the design process:

Ask:

- Can I easily follow up with each participant via their preferred communication format?

- Can my toolkit create and distribute visualizations to explain key themes and results?

- Can I tailor my messaging to participants based on their initial response?

These things were front of mind when we chose to invest in building an integrated email communications toolkit into PublicInput. Here’s a quick illustration of building an email with an embedded follow-up question:

Collaborate

Promise to the community: We will work together with you to formulate solutions and incorporate your advice and recommendations into the decisions to the maximum extent possible.

Community feedback plays a role in every project, but to what extent? We often want to know what residents think of a pending issue, and then let that inform—but not necessarily determine—the final decision.

Myth: Collaboration is time-consuming and can place critical projects at risk.

What if the opposite were true? In Austin, tensions ran high when a Major League Soccer team announced it intended to partner with the city to build a new stadium. Opponents raised concerns about the use of public land, gentrification, and increased traffic.

When there are millions of dollars in economic development on the line, a knee-jerk reaction might be to downplay concerns and the role of the public in a final decision. Yet the communications team recognized that a lack of public involvement posed a greater risk than involving the public in the decision.

Partnering with economic development leaders, the communications team led a broad outreach effort. They asked about public support for a new stadium and what benefits would be expected from the team in exchange for use of city land.

The end result was a clear voice of public support for the project, along with key investments expected from the team. Priorities included things like workforce development, youth programs, and investments in transportation improvements. These items were then incorporated into negotiations with team owners.

When a vote on the project reached city council, over 100 people arrived at the meeting to voice their opposition. Was the project destined for an early demise, or a multi-month quagmire of committee meetings? Not this time.

Council had clear data from over a thousand residents who had their concerns heard and addressed – 84% of whom now supported the project. The project moved forward and is expected to break ground in September of 2019.

Ask:

- Does my toolkit support question formats like prioritization to get past singular viewpoints?

- Can my toolkit conduct targeted outreach (e.g. geofencing) to reach people most impacted by decisions?

- Can I provide stakeholders and elected officials clear metrics and data showing the desires of their constituents?

We’d recommend the Austin MLS case study as a great testament to the ROI of collaborative engagement:

Empower

Promise to the community: We will implement what you decide.

This format of engagement strikes terror into the hearts of many well-meaning elected officials and department heads. The mere thought of ceding full control over important decisions like budgets, operations, and capital projects can feel daunting. But those fears are grounded in a misunderstanding of what public participation is all about.

Myth: Empowerment is the ultimate goal of great public engagement practitioners.

In truth, empowerment is just one more approach to engagement. While it’s probably not a good fit for determining wastewater treatment protocols or the curb depth of public streets, it can be a powerful lever to build public trust in the right context.

For example, Virginia Beach gave the public complete decision-making power over which mural should be painted on a staircase in a popular public park. Using a survey question with visuals, residents were able to give their feedback with one click. The effort engaged over 7,300 residents, many of whom signed up for ongoing updates from the city.

Ask:

- Does our toolkit have security features to prevent “ballot box stuffing” attempts?

- If we’re concerned that one group has outsize influence, can our software help us reach a broader set of voices?

- If we want to give more weight to specific stakeholder voices, can our software segment and group participants?

With those three things answered affirmatively, you can de-risk this approach and tap into its power to increase engagement and public trust. We recommend the Virginia Beach case study, covering engagement from Public Art to Disaster Relief:

Better together: IAP2 best practices + Public Participation software

With the growing role of technology in engagement, it is important that we not make the public participation process harder by adding yet another siloed tool for email, text messaging, online surveys, social media, and meeting participation.

Together, technology and best practices should move us into a new paradigm of meeting people where they are. By combining our efforts into a cohesive process, we can make participation more equitable, accessible, and meaningful for all.